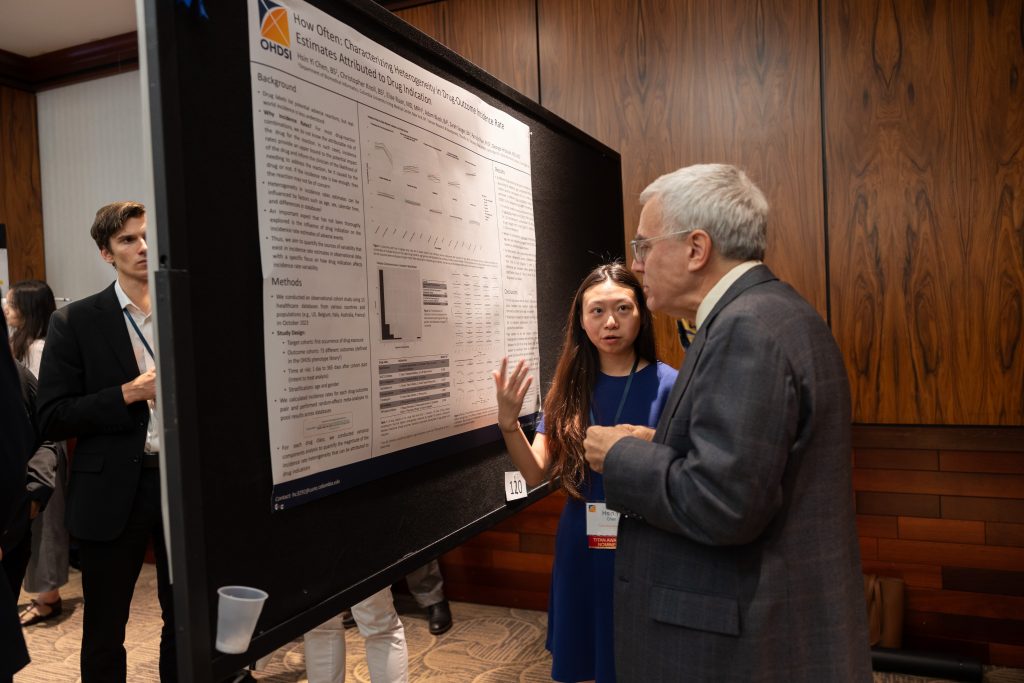

Collaborator Spotlight: Hsin Yi "Cindy" Chen

Hsin Yi “Cindy” Chen is a fifth-year MD-PhD candidate at Columbia University, where she works under the mentorship of Dr. George Hripcsak in the Department of Biomedical Informatics. After earning her B.S. in biometry and statistics from Cornell University, she spent two years as a research assistant at Yale Neurology, building predictive models for neurocritical care. Her current research focuses on improving methods for large-scale observational data analysis, specifically investigating how to leverage data to reach causal conclusions and address complexities like censoring bias in high-dimensional datasets.

Within the OHDSI community, Cindy is an active member of the LEGEND initiative, the Methods workgroup, and the Eye Care and Vision Research workgroup. Her significant contributions to the field were recognized at the 2025 OHDSI Global Symposium, where she was a featured speaker and received the Best Community Contribution Award in Clinical Applications for her talk on the “Heterogeneity of Treatment Effects in Type 2 Diabetes Mellitus”.

In the latest collaborator spotlight, Cindy discusses how her clinical training shapes her approach to research, how OHDSI has influenced her PhD journey, how network studies can build trust in science, and more.

Can you tell us about your background and the research questions that most excite you right now?

I am currently a fifth-year MD-PhD student at Columbia (finished 2 years of medical school and now in the third year of my PhD) working with Dr. George Hripcsak. Prior to this, I got my B.S. in biometry & statistics at Cornell, and then I spent 2 years at Yale Neurology as a research assistant working on building predictive models using multi-modal data for neurocritical care.

I always jokingly say that the research questions that excite me most right now are the ones that my advisor is also most excited about right now! That isn’t untrue. In fact, I joined George’s lab because I am interested in ways to improve methods for large-scale observational data analysis. When you’re in the beginning stages of studying statistics, you are always told “correlation is not causation,” but many of the questions we are interested in answering in medicine are about causation. So how can we leverage the data that we have to get closer to a causal conclusion (keeping in mind that randomized controlled trials are not always possible)? How can we make the conclusions that we draw more reproducible and reliable? As part of my OHDSI work, I am currently interested in censoring bias. We lose follow-up for folks in electronic health data all the time, for all sorts of different reasons (people move, people decide to switch doctors, people just decide to not show up anymore), so how does that affect our analysis? It seems like, theoretically, we know what happens and how to fix it. But in the large-scale, high-dimensional data that we tend to use in OHDSI, it becomes more complex, and there is a lot of work we can do there.

As an MD-PhD trainee, how does your clinical training shape the way you approach biomedical informatics research, and how does your informatics training influence how you think about patient care?

This is an interesting question because I was just talking to my program director about how I wish there had been more clinical exposure before I started my PhD. At Columbia, we finish 18 months of pre-clinicals (lecture-style, learn about medicine), take our first set of boards (USMLE Step 1), and do 2 clinical rotations before starting the PhD. Other schools do it differently (some do the rotations entirely after, some do boards after, etc.), and I’m glad I got to have a couple of months of exposure to clinical medicine outside of the classroom, but it really did not feel like enough to fully inform my informatics research. That said, I think even that brief exposure to clinical medicine has shaped my perspective on how generative AI will be a part of medicine (as it is a hot topic in informatics right now and ChatGPT came out around the time I started my PhD). Compared to many of my peers, I think I’m already more jaded, and I am more aware of its inherent limitations and how it can harm rather than help patients.

You are pursuing your MD-PhD at Columbia University, the coordinating center for OHDSI. How has being embedded in the OHDSI ecosystem influenced or empowered your research and training journey?

Being a part of the OHDSI community has been the highlight of my PhD thus far. In the community, I’m involved with the LEGEND initiative, the Methods working group, and most recently, I joined the Eye Care and Vision Research workgroup. The people I’ve met through OHDSI are helpful and kind, and I have also had the opportunity to work with some incredible researchers who I really look up to (like Marc Suchard and Martijn Schuemie!). I have also taken advantage of the available tools that folks in our community have spent so much time and energy developing (think ATLAS, CohortIncidence, CohortMethod, Strategus, Cyclops, etc.), and they’ve all made my workflow a thousand times better. Looking forward, I would love to make my “own” mark on the OHDSI community, maybe by contributing some knowledge about a method, or by leading a large network study!

Your 2025 Global Symposium talk on Heterogeneity of Treatment Effects in Type 2 Diabetes Mellitus received the Best Community Contribution Award in Clinical Applications. What were the most important insights from that work, and why do they matter clinically?

One of the most important insights from this work is that calculating only average treatment effects (the average difference in outcomes between two drugs) can mask meaningful differences in how patients with type 2 diabetes respond to therapy. For example, we observed that biguanides, compared with DPP-4 inhibitors, were associated with greater cardiovascular risk reduction among patients with hyperlipidemia and obesity. We also observed that SGLT-2 inhibitors, compared with DPP-4 inhibitors, were associated with reduced stroke risk primarily among non-obese patients.

Clinically, these findings matter because treatment selection in type 2 diabetes is increasingly complex. Over the past decade, treatment options for type 2 diabetes have expanded, and physicians can now choose among multiple drug classes with different cardiovascular and safety profiles. Our work suggests that routinely available patient characteristics could help guide therapy selection toward a more personalized approach. Though not a focus of this study, I would be really interested in knowing more about genetic markers and how they might play into this heterogeneity of treatment effect as well. Ultimately, this type of evidence can support more tailored clinical decision-making and help us move towards personalized medicine, which I’m excited about.

One of the Symposium themes was “improving trust in science.” From your perspective, how do large-scale, open, network-based studies—like those conducted in OHDSI—help build more trustworthy clinical evidence?

I feel like the concept of chasing a significant p-value is something that has been plaguing science. It sometimes seems like the inherent value of science is whether you’ve managed to get your p-value to be <0.05. In my opinion, it’s better to be right than significant. Why would you want a significant result that’s a type I error? It’s more important to me that we chase the correct answer (yes, I understand that we often don’t know what is correct in real-world data), or at the very least, have confidence intervals that reflect the uncertainty we have around an estimate.

This is what I appreciate about OHDSI network studies and OHDSI’s LEGEND principles. We (1) always define, via a protocol, the analyses we are doing, and (2) run all of the analyses and report all of them, regardless of whether a comparison is significant or not. I also think OHDSI has done a lot of work in developing a set of diagnostics and methods to help mitigate some of the bias and lack of trust in observational studies, including covariate balance, minimum detectable relative risk (MDRR), and negative control outcome calibration. And the best part is that all these tools are available for the public to use. I think this type of transparency and reproducibility is a step in the right direction for building more trustworthy clinical evidence.

Looking ahead 10 years, how do you hope the work you’re doing now will change the way clinicians make decisions for individual patients?

What a loaded question! I think science (and of course, medicine) is a team sport, so I am not sure any individual piece of my work will end up single-handedly changing clinical decision-making, but I really do hope I can contribute meaningfully to our growing body of clinical evidence. What I am most excited about looking forward is being able to influence more clinicians to take the OHDSI methods and principles and apply them to their observational studies! I think more data partners and more robust clinical evidence generation can benefit us all.

What are some of your hobbies, and what is one interesting thing that most community members might not know about you?

Some of my hobbies include language learning, playing the piano, and fiber arts (though with varying levels of commitment depending on my mood). I’ve been working on a cross stitch lately, and I just started reading The Friend by Sigrid Nunez.

Something you might not know about me is that I stress bake. Right before my PhD qualifying exam, I was baking sourdough loaves, cookies, and my cheddar-chive savory scones (a crowd favorite!) all the time. I don’t identify as a baker per se, but I have been told that I’ve honed that skill through being stressed in med school and the PhD journey. Anyway, if anyone wants a super robust sourdough starter, hit me up! (I’m serious, I’ll bring it to you at the next symposium).